Microsoft Recalled

Microsoft Recall is a dumpster fire. It should be a bigger story that it was somehow announced and shipped the way it was.

On the note of AI applications and moving fast, Joanna Stern of the WSJ writes:

Microsoft is recalling... Recall. Kinda. The company is making some changes before it releases it, including making taking screenshots of everything you do opt-in!

(Joanna Stern on X)

Joanna links to Microsoft’s announcement by Pavan Davuluri:

"Even before making Recall available to customers, we have heard a clear signal that we can make it easier for people to choose to enable Recall on their Copilot+ PC and improve privacy and security safeguards [...] With that in mind we are announcing updates that will go into effect before Recall (preview) ships to customers on June 18."

Published June 7th, 11 days before the feature is set to ship.

This is both surprising and unsurprising to me.

It’s certainly surprising, to say the least, for a brand to do a major about-face on the ‘headlining’ feature of the new Copilot+ PC (wonderful name, by the way), making it opt-in instead of opt-out and changing it in several significant ways literally days before launch.

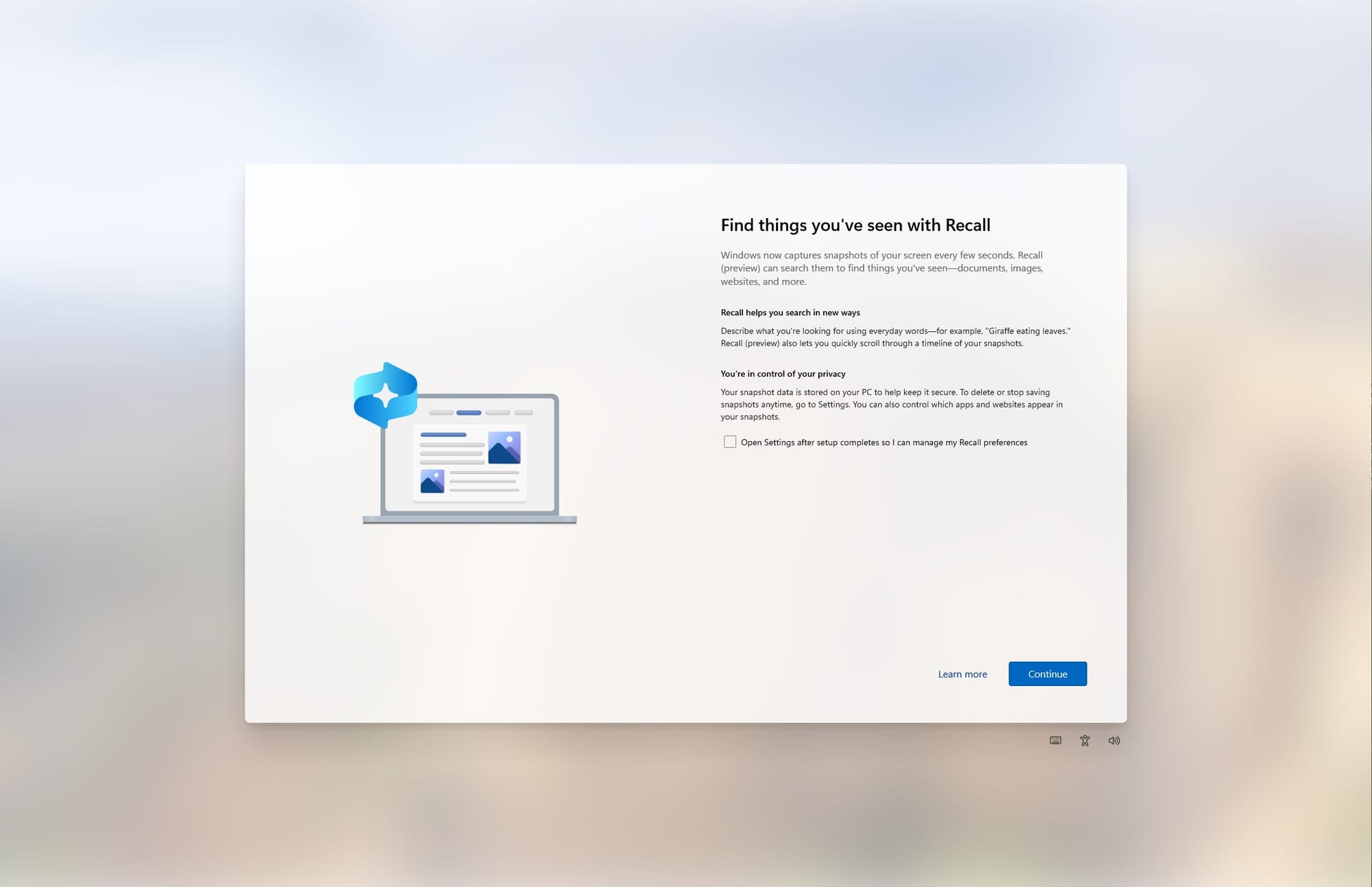

People who have tracked the feature know that it was previously what I’d like to label an ‘unlikely opt-out’, the kind that is very difficult to turn off during Windows setup, similar to their efforts to make it difficult to set up an installation without a Microsoft account:

It's enabled by default during setup and you can't disable it directly here. There is an option to tick "open Settings after setup completes so I can manage my Recall preferences" instead

Going from this to a fully opt-in feature shows a tremendous change of confidence in the feature. And on the note of confidence in the feature...

In an interview with Satya Nadella, again by Joanna Stern:

First off, I found it intriguing how this video went instantly viral on social media in a sort of ‘hell nah’ way. Taking Microsoft at their word (and one should, if the CEO of a 3-trillion-dollar company claims something), it seemed creepy, potentially a bad idea. Chilling, perhaps, mostly because people know Microsoft as the ‘company that the boss likes’. Microsoft sells companies office and IT software, and nobody is all that excited about the potential for an AI-powered panopticon on their work computers.

Nobody is going to put Microsoft at the top of their list of tech companies they actively trust. It’s a bit telling of internal Microsoft culture that there wasn’t more deliberate communication around this announcement to explain to users (at length!) why they should trust this. It’s utterly bizarre to me, on par with what I observed at Facebook in its peak days, when internal culture was so nauseatingly optimistic about the ‘Good’ they were doing that everyone was perplexed that everyday people found the company altogether creepy.

Anyway, here’s the transcript of the bit that matters:

Joanna: “There could be this reaction from some people that say this is pretty creepy. Microsoft is taking screenshots* of everything I do.”

Nadella: “Yeah. I mean that is why you can only do it on the edge. So you have to put two things together. This is my computer. This is my Recall. And it’s all being done locally. [...] Recall works as a magical thing, because I can trust it.”

At the Microsoft Windows Copilot+ PC event: (again, great name, rolls off the tongue)

“The deep integration also allows an incredibly robust approach to privacy and security.”

(from the announcement event, link to timestamp in video)

Interestingly, I went back to these videos and announcements, because I could swear they mentioned something about data security. Perhaps I am just too used to Apple, which seems to fret a lot about telling users that things are kept on a secure chip and such, but looking back, there was actually no such commitment. One would, perhaps, assume that it would naturally be encrypted on device. Surely?

That brings me to the unsurprising part of suddenly taking a U-turn on your headline feature a week or two from launch: Recall is a disaster. It’s an absolute dumpster fire in terms of security. It’s hard to imagine that any part of Microsoft’s (excellent) Security team ever signed off on this.

Kevin Beaumont, on Medium: "Stealing everything you’ve ever typed or viewed on your own Windows PC is now possible with two lines of code — inside the Copilot+ Recall disaster."

"I think it’s an interesting entirely, really optional feature with a niche initial user base that would require incredibly careful communication, cybersecurity, engineering and implementation. Copilot+ Recall doesn’t have these. The work hasn’t been done properly to package it together, clearly."

Hm, OK. Microsoft is pretty capable, surely there’s some smart work in here? As it turns out, yes — there’s a lot done to keep this truly local. But...

Q. How do you obtain the database files?

A. They’re just files in AppData, in the new CoreAIPlatform folder.

Q. But it’s highly encrypted and nobody can access them, right?!

A. Here’s a few second video of two Microsoft engineers accessing the folder

Kevin’s tweets / toots on the matter are a great read, and they got progressively more hilariously outrageous as the investigation went on. Essentially, one could argue that like all other user data, the data is encrypted. But few steps were taken to ensure the data was truly protected.

Kevin proved that local malware without admin rights can easily tap into the data. He provided proof that you can easily exfiltrate the data from another machine. Hilariously, Microsoft’s very own Copilot AI can even help you write a script to get data out of the Recall database.
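To make the point concrete without touching Recall itself, here is a minimal Python sketch of why "it's just a database file in the user's profile" is such a problem. Everything in it is a stand-in: the temporary file, the `captures` table and its columns are hypothetical and are not Recall's real path or schema. It only shows that a plain SQLite file can be opened and searched by any code running as the same user, with no key, prompt, or admin rights involved.

```python
import os
import sqlite3
import tempfile


def write_demo_db(path):
    """Create a stand-in database. Table and column names are hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE captures (taken_at TEXT, window_title TEXT, ocr_text TEXT)"
    )
    conn.executemany(
        "INSERT INTO captures VALUES (?, ?, ?)",
        [
            ("2024-06-04T09:00:00", "Bank - Browser", "account balance 1,234.56"),
            ("2024-06-04T09:05:00", "Mail", "password reset link"),
        ],
    )
    conn.commit()
    conn.close()


def read_as_other_process(path, needle):
    """Open the file with no key or prompt, as any same-user process could."""
    conn = sqlite3.connect(path)
    rows = conn.execute(
        "SELECT window_title, ocr_text FROM captures WHERE ocr_text LIKE ?",
        (f"%{needle}%",),
    ).fetchall()
    conn.close()
    return rows


def demo():
    # A temporary directory stands in for a folder inside the user's profile.
    with tempfile.TemporaryDirectory() as tmp:
        db_path = os.path.join(tmp, "demo.db")
        write_demo_db(db_path)
        return read_as_other_process(db_path, "password")


if __name__ == "__main__":
    print(demo())
```

Disk encryption does not change this picture: once the user is logged in, the file is readable to their processes, which is exactly where malware runs.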

Ars Technica got its hands on Recall and managed to open another user’s Recall database:

ArsTechnica enabled Recall on Windows 11 box and tested the claim that only you can access ‘your Recall’

By logging in as another user they could access the database and screenshots. https://t.co/sXifVsGQKa pic.twitter.com/jg0KjLPg33

(Kevin Beaumont, @GossiTheDog, June 4, 2024)

“How does this even happen?” was a common response on the internet, and somehow, there’s even an answer to that: WindowsCentral reports that Microsoft kept the feature out of wider testing to keep it a secret ahead of launch.

"Microsoft also wanted to keep Windows Recall a secret so it could have a big reveal on May 20. Except, it wasn't really much of a big reveal. Many of us in the tech press already knew it was coming, even without being briefed on the feature ahead of time."

It pairs well with Microsoft’s new assertion that cyber security is its new company-wide focus “above all else”.

Unsurprising, then, that this was pulled at the last minute. But there should be a much bigger story in the press about how this got so close to shipping.

*screenshots. Words matter. Microsoft goes to great lengths not to call them that. They call them ‘snapshots’ everywhere, which is a somewhat less creepy word. At the event Microsoft went as far as to claim Recall taps ‘efficiently’ into ‘the graphics compositor’. As we now know, it’s just a series of screenshots. Why the marketing words? It does sound a lot more impressive, but I suspect someone at Microsoft’s marketing department pitched the idea in a meeting: “Let’s call it snapshots. It sounds less intrusive.”
